Nearly half of in-house legal professionals say they would not detect an unauthorized or incorrect action taken by an AI agent until after it had already occurred — sometimes days or weeks later — according to new survey research released today by Icertis, the contract lifecycle management company.

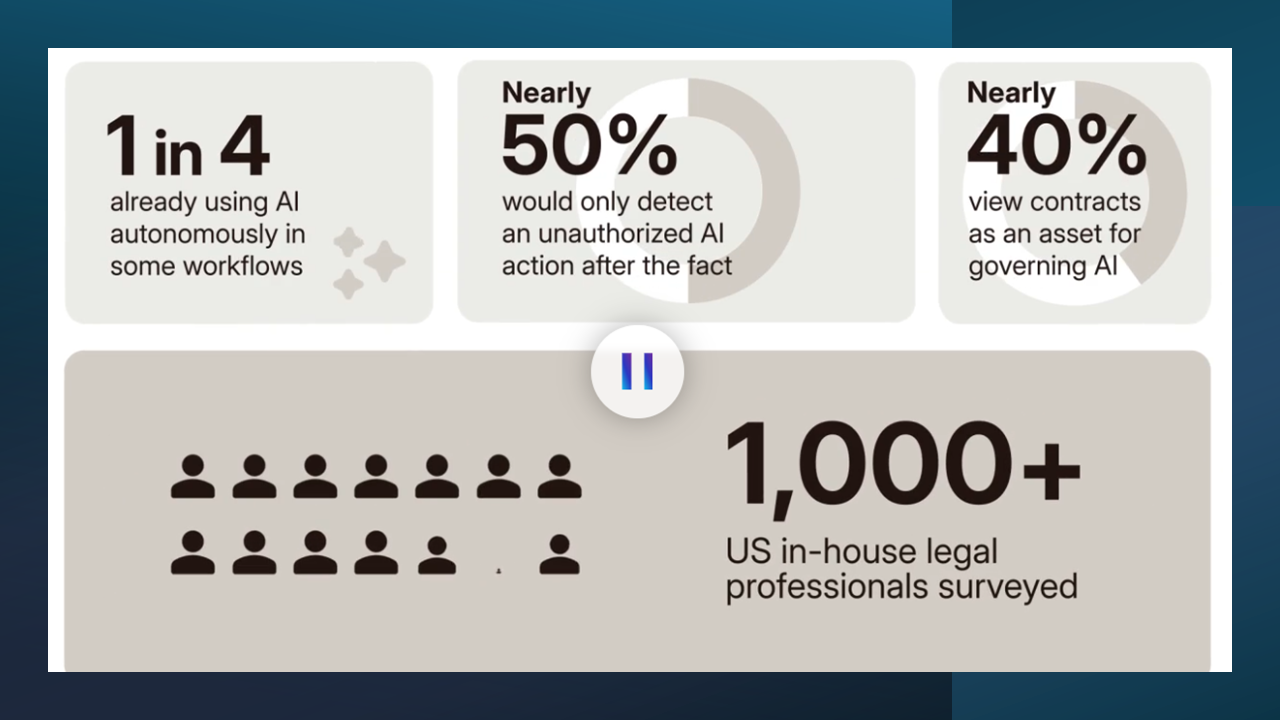

The findings, drawn from a survey of more than 1,000 U.S. corporate legal practitioners, point to a governance gap that the company argues has emerged as AI tools have grown more autonomous.

While the vast majority of respondents said they primarily use AI in an assistive capacity, roughly one in four said AI occasionally handles tasks on its own, and for nearly 10% of respondents, human review of AI activity is already the exception rather than the rule.

Despite that growing autonomy, only about 23% of legal teams said they have a comprehensive, documented agentic AI policy in place, and only 34% said their existing general AI policies adequately cover agentic use cases. About 60% expressed confidence that their policies would be ready to govern AI agents within the next 12 to 24 months.

Accuracy concerns compound the governance challenge. Only 26% of respondents said they were very confident that the AI their team uses is accurate enough for high-stakes business decisions, and roughly half said they must apply human judgment before trusting AI-generated outputs.

The survey also found fragmented visibility into AI activity. About 39% of respondents said they are confident in real-time visibility into their AI agents’ actions, while an equal share said they would likely catch a problem — but only after the fact. Eight percent said an AI action could go undetected for days or weeks.

On the question of accountability when AI makes a mistake, responses were split nearly evenly: 23% said responsibility would fall on the team that deployed the agent, 23% said the team managing its day-to-day operations, and 22% said it would depend on the circumstances. Only 10% said legal would bear accountability when compliance is compromised — even though 35% said legal is the primary owner of AI usage policies.

The survey also flagged concerns about data connectivity. More than 70% of respondents said their teams use generic large language models — tools like ChatGPT or Claude — in their legal work, with 65% using those tools directly on contracts. Only 17% said their AI tools both send and receive data from other business systems, while 23% said their AI data stays entirely within legal systems.

Icertis, whose platform is built around contract intelligence and management, frames the problem in terms of a solution it is positioned to offer: contracts as a governance layer. The report argues that contract data can give AI agents the business context needed to act accurately. It also finds that only 38% of legal teams currently view contracts as a tool for governing AI, though another 32% said they see the potential.

“The speed of AI innovation is outpacing the governance meant to oversee it,” said Bernadette Bulacan, chief evangelist at Icertis, “and legal is feeling this pressure on two fronts: with the use of AI agents in their own department, and through increasing usage by other functions.”

The full report is available on the Icertis website.

Robert Ambrogi Blog
